For engineering directors and platform leaders

Evaluate AI tools against what you actually need

Every vendor claims to be the complete AI platform. None of them are. Start from your capability requirements, then find which tools fit — not the other way around.

Sound familiar?

If you're responsible for the AI platform, these probably hit close to home:

Vendor POCs never end, and every tool claims to do everything. You have no neutral reference to compare them against.

You bought 8 tools that each do one thing well, but they don't compose into a coherent architecture.

You can't see which capabilities your team can actually operate versus which ones need new hiring or training.

POCs became production. You're now maintaining 5 different approaches to the same problem.

How it works

A structured comparison workflow in three steps:

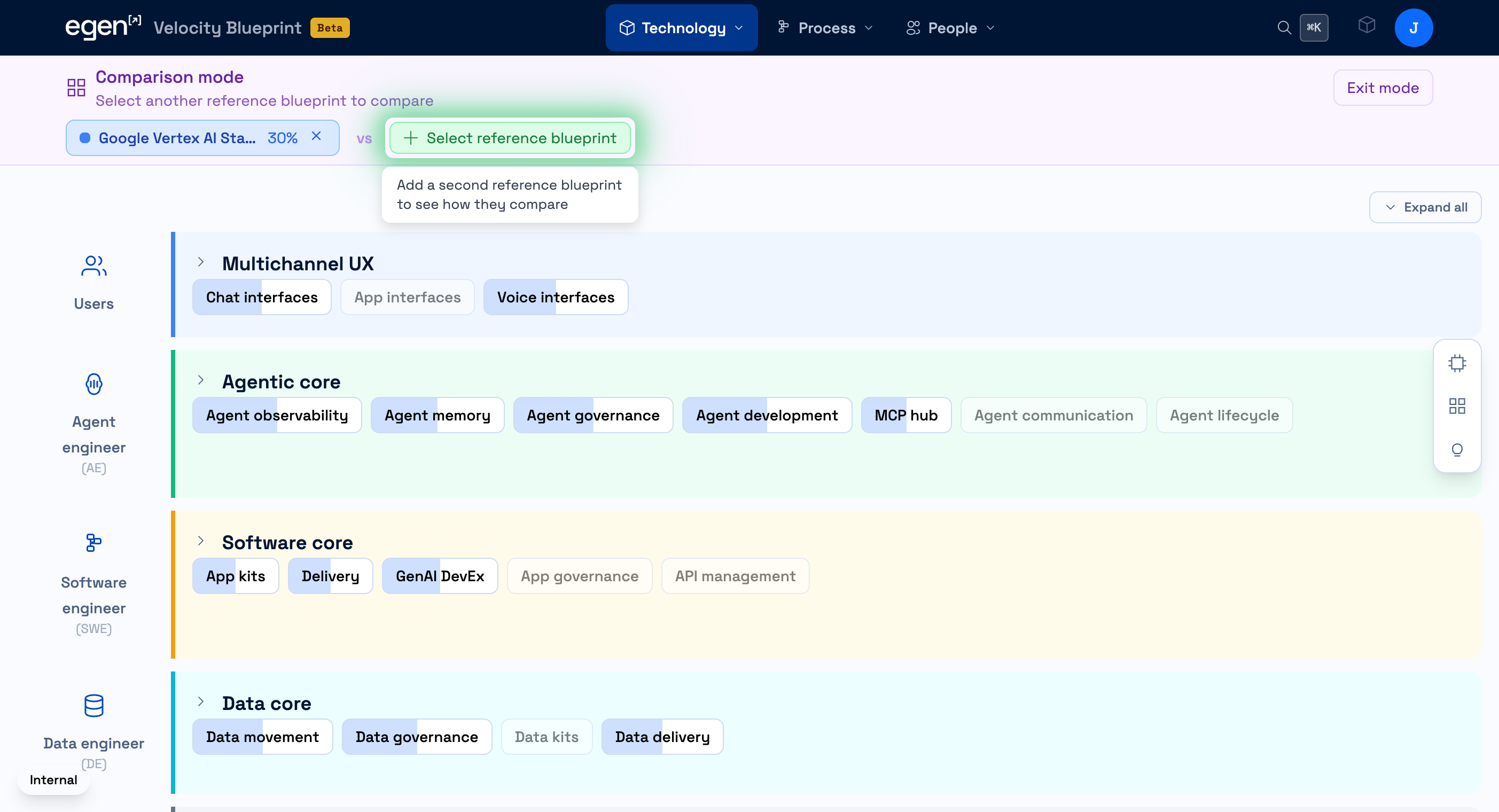

Select your first reference blueprint

Browse published reference blueprints — curated technology combinations for common AI patterns like RAG pipelines, multi-model orchestration, or data platforms. Select one to see its coverage across all 170+ capabilities.

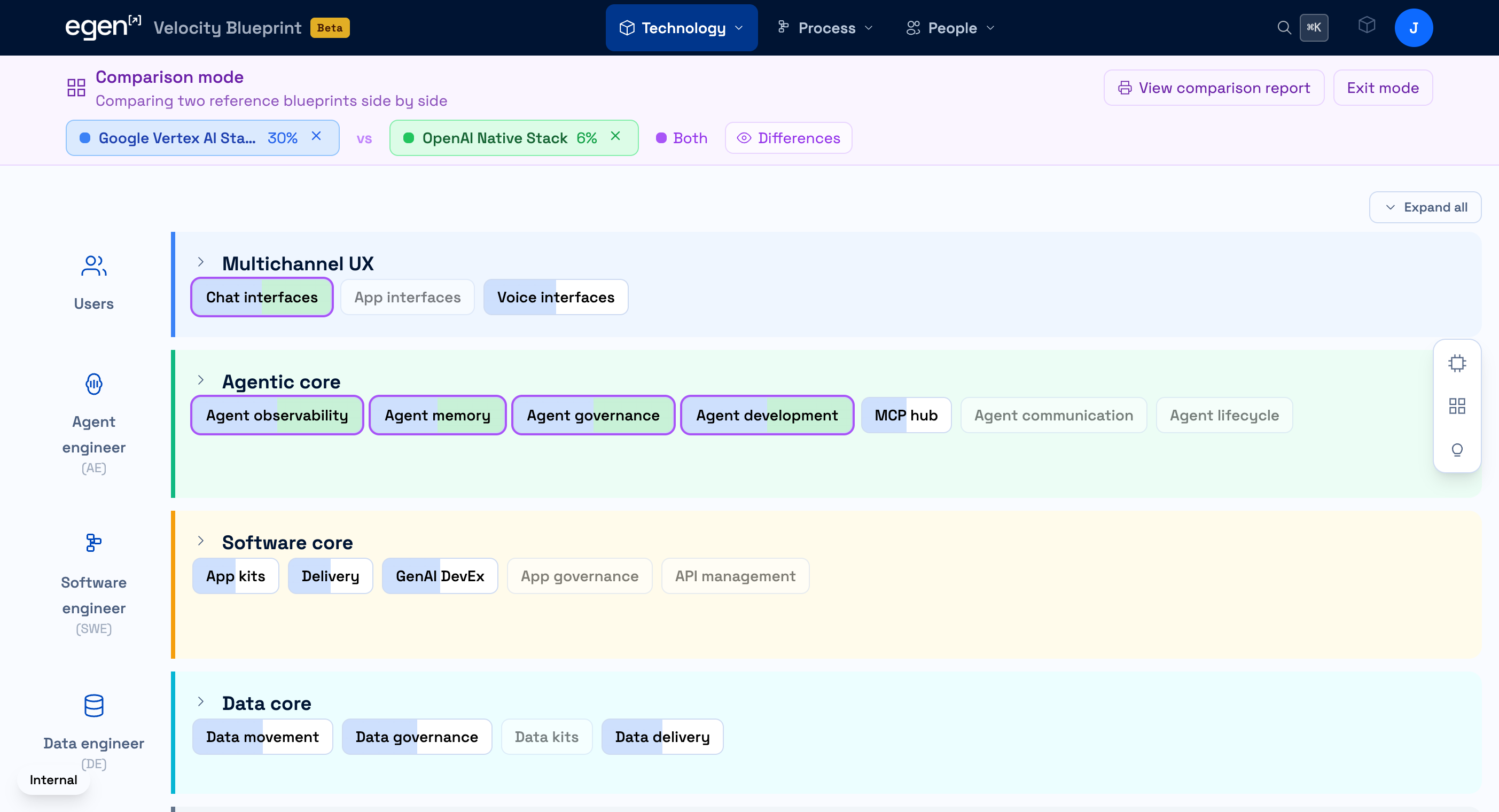

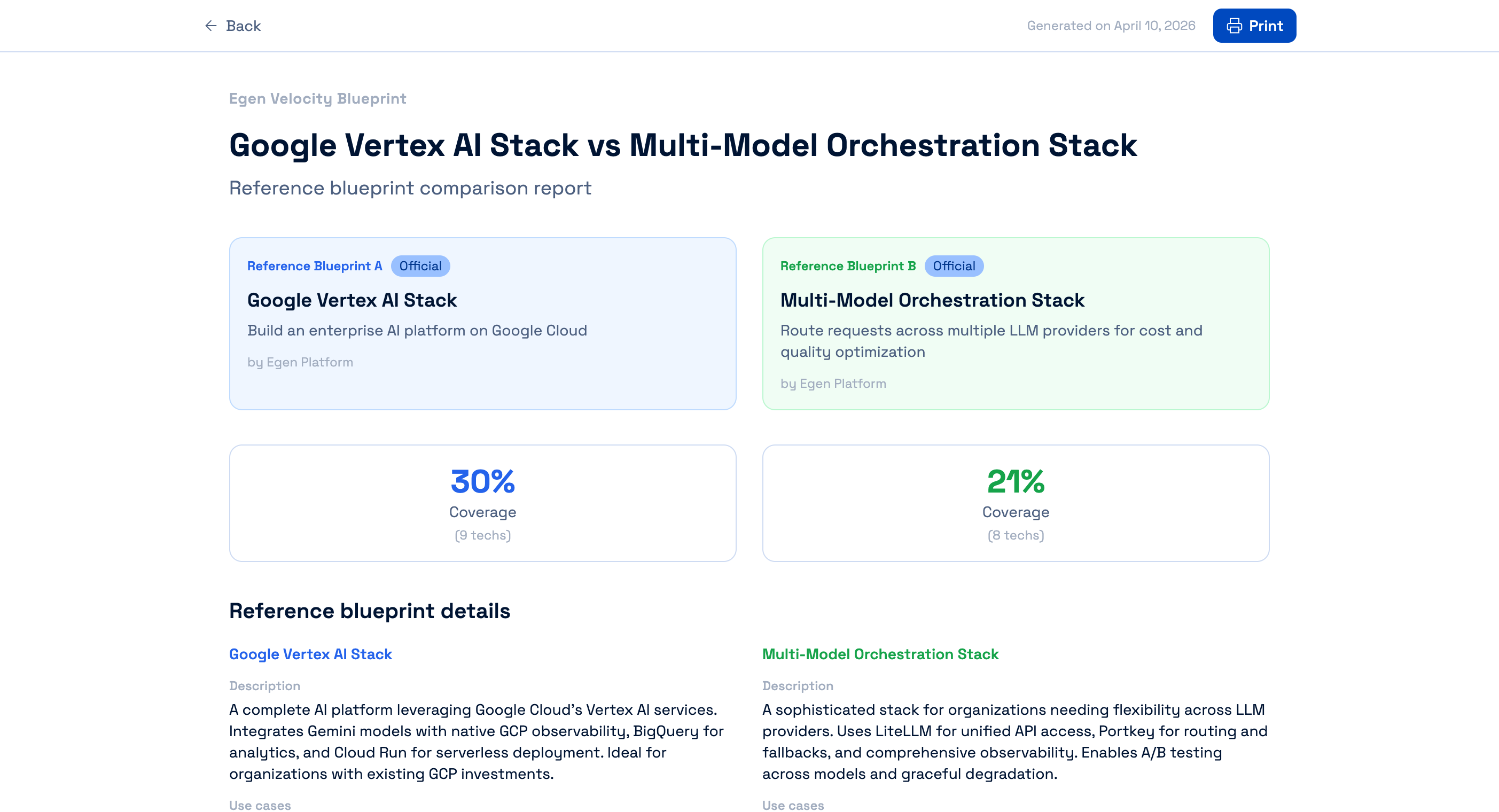

Add a second blueprint and compare side-by-side

Add a second reference blueprint to see both compared layer by layer. Click into each to drill down on individual technologies and see exactly where they overlap and where they diverge.
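For readers who want to picture what a layer-by-layer comparison computes, here is a minimal sketch. It assumes each blueprint can be modeled as a mapping from layer name to the set of capabilities it covers. The layer names, capability names, and the `compare_blueprints` helper are hypothetical illustrations, not the product's actual data model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerDiff:
    """Coverage comparison for one layer (hypothetical structure)."""
    shared: frozenset       # capabilities both blueprints cover
    only_first: frozenset   # covered only by the first blueprint
    only_second: frozenset  # covered only by the second blueprint


def compare_blueprints(first, second):
    """Compare two blueprints layer by layer.

    Each blueprint is assumed to map a layer name to the set of
    capabilities it covers in that layer.
    """
    diffs = {}
    for layer in sorted(first.keys() | second.keys()):
        a = frozenset(first.get(layer, ()))
        b = frozenset(second.get(layer, ()))
        diffs[layer] = LayerDiff(shared=a & b, only_first=a - b, only_second=b - a)
    return diffs


# Made-up example data: two small blueprints with invented capabilities.
rag_pipeline = {
    "retrieval": {"vector search", "reranking"},
    "serving": {"model gateway"},
}
multi_model = {
    "serving": {"model gateway", "model routing"},
    "observability": {"tracing"},
}

diffs = compare_blueprints(rag_pipeline, multi_model)
for layer, diff in diffs.items():
    print(layer)
    print("  overlap:    ", sorted(diff.shared))
    print("  only first: ", sorted(diff.only_first))
    print("  only second:", sorted(diff.only_second))
```

The per-layer sets of capabilities covered by only one blueprint are where the two diverge, and the shared set is where they overlap.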

Your next vendor conversation starts here

Walk into the meeting knowing exactly which capabilities you need covered. That changes the conversation from "show me your features" to "show me how you fill these gaps."